How Ancestry Used AI to Unlock a New Customer Segment

The AI playbook that turned Ancestry's biggest conversion gap into a growth engine.

👋 Hi, it’s Gaurav and Kunal, and welcome to the Insider Growth Group newsletter, our bi-weekly deep dive into the hidden playbooks behind tech’s fastest-growing companies.

Our mission is simple: We help you create a roadmap that boosts your key metrics, whether you're launching a product from scratch or scaling an existing one.

What We Stand For

Actionable Insights: Our content is a no-fluff, practical blueprint you can implement today, featuring real-world examples of what works—and what doesn’t.

Vetted Expertise: We rely on insights from seasoned professionals who truly understand what it takes to scale a business.

Community Learning: Join our network of builders, sharers, and doers to exchange experiences, compare growth tactics, and level up together.

Before We Dive In: Key Terms

A few terms come up repeatedly in this article.

Here is what they mean:

Genealogy - The study of family history and lineage. Genealogists trace who their ancestors were, where they came from, when they were born and died, and how family lines connect across generations. In practice this means hunting through historical documents like census records, immigration files, birth certificates, and military registrations to piece together your family’s story going back decades or centuries.

Genealogy expertise - The practical skills required to do that research successfully: knowing how to read and interpret old handwritten records, searching for ancestors whose names were spelled differently across documents, navigating conflicting information across multiple sources, and following family lines through migration patterns and name changes.

The Growth Lever Most Product Teams Overlook

There is a problem hiding inside many of the best consumer products today: they work beautifully for users who already know how to use them. The harder question is what happens to the millions of people who arrive curious but lack the expertise to get value out of them.

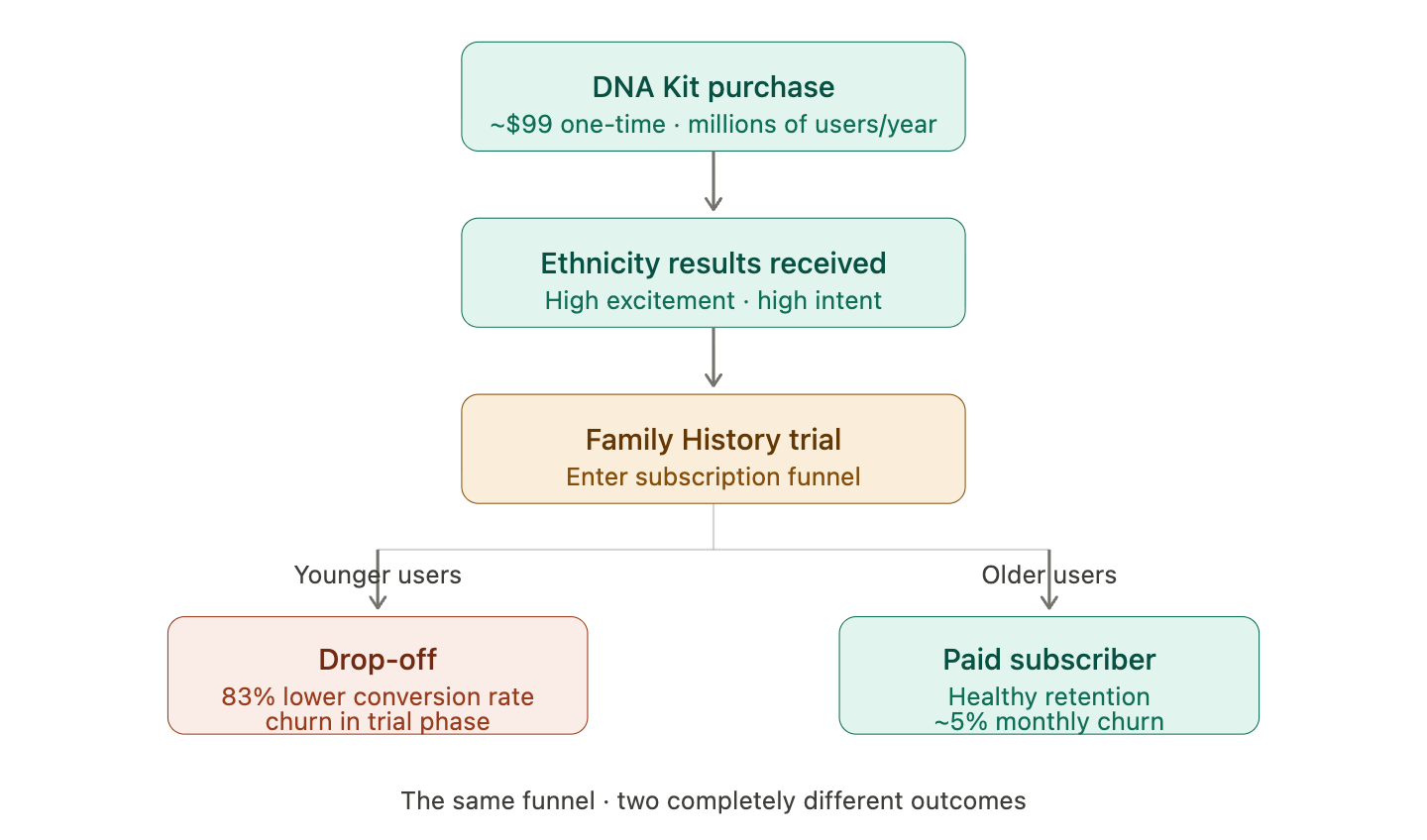

That question sat at the center of a major growth challenge at Ancestry. Every year, millions of people purchased an Ancestry DNA Kit, a simple saliva test that returns your ethnicity breakdown and connects you with genetic relatives. It is a delightful, shareable experience that resonated deeply with younger adults. After receiving their DNA results, many of these users entered the Family History subscription funnel, where Ancestry invited them to go deeper and explore historical records to trace their lineage.

The Family History subscription is where Ancestry’s core revenue lives. It gives subscribers access to billions of historical documents: census records, immigration files, vital records, military registrations, and more. For older users who had spent years building genealogy expertise, the product performed well, with healthy single-digit monthly churn after four or more months.

But for younger users arriving through the DNA funnel, something was not working. Their conversion rate from DNA Kit user to paying Family History subscriber was 83% lower than that of older, experienced users. Conversion rate here means the percentage of DNA users who entered the subscription trial and went on to become active paying subscribers.

With millions of DNA kit users entering this funnel every year, even a fractional improvement in that conversion rate would translate into tens of millions of dollars in incremental annual revenue. The opportunity was clear. The real question was why younger users were struggling so much and what could actually be done about it.

The counterintuitive part: younger users were not less motivated. They were often more excited after receiving their DNA results than older users who came in through organic genealogy interest. The problem was not enthusiasm - it was genealogy expertise. Ancestry had built a product that rewarded it but never taught it.

Today we break down the two-part playbook Ramesh and his team used to close that gap: an AI co-pilot that replaced the need for genealogy expertise, and a ground-up rebuilt search experience designed specifically for a new generation of users.

This post is written for product managers and founders who have a product with a meaningful learning curve, where the users who succeed tend to already bring relevant experience to the table. If you have ever looked at your conversion data and noticed that a newer or younger audience is underperforming relative to your core base, and you are not entirely sure why, this story will feel familiar.

Introducing Ramesh Krishnamurthy

Ramesh Krishnamurthy is an AI Product Lead at Meta with a career spanning some of the most product-forward companies in tech. At Ancestry, Ramesh led Search & AI Products with the goal of evolving the genealogy subscription product to appeal to a broader audience, specifically younger adults. His mandate was to find out why younger users were not converting and to build the product interventions to fix it. Before Ancestry, he built products at Amazon and Glassdoor, developing a deep foundation in consumer product design, data-driven experimentation, and AI product development at scale.

Understanding Ancestry’s Two-Product Growth Challenge

To understand why this problem existed, it helps to understand how Ancestry’s two core products relate to each other.

The DNA Kit is a one-time purchase, typically around $99. You mail in a saliva sample and within a few weeks receive a detailed ethnicity breakdown showing your genetic origins by region, plus a list of genetic relatives who have also taken the test. The experience is immediate, visual, and highly shareable on social media. It consistently resonated with younger adults who were curious about their heritage but had no prior interest in genealogy research.

The Family History subscription costs about $40 per month and gives access to billions of historical documents. This is where the deep research happens: tracing your family tree back multiple generations, finding immigration records, reading century-old census entries, and piecing together the story of where your family came from. Traditionally, this product resonated most strongly with older adults who had spent years or decades developing genealogy expertise.

The natural growth engine Ancestry had built was to use the DNA Kit as an acquisition channel that fed curious new users into the Family History subscription. The emotional hook of seeing your ethnicity results would ideally translate into a desire to learn more, and the subscription would be how you scratched that itch.

For older users, this worked well. For younger users coming in through the DNA funnel, the gap between what they arrived expecting and what the product actually required of them was significant enough that most did not make it through.

The older cohort that converted well had not learned genealogy from Ancestry either. They had learned it elsewhere (through relatives and YouTube videos), years before ever signing up, and arrived as already-competent users. Ancestry had been quietly benefiting from an invisible pipeline of pre-educated customers without ever realizing it. When that pipeline stopped being the primary acquisition source, the underlying product gap became hard to ignore.

CASE STUDY #1: Building the AI Co-Pilot That Replaced Your Expert Friend

How Ancestry built an AI assistant that acted like having a knowledgeable friend or family member sitting next to you, and drove 40% higher repeat visits plus a 17 bps lift in subscription conversion.

The Discovery: What User Research Actually Revealed

When the team started digging into why younger users were not converting, the initial hypothesis was that the product was simply too complex. But complexity alone is not an actionable diagnosis. Ramesh and the team needed to understand what specifically was breaking down and when.

What emerged from that research was a pattern that changed how the team thought about the entire problem. Successful users, the ones who converted and stayed, had almost universally built genealogy expertise before they arrived at Ancestry. Not through the product itself, but through outside channels: a genealogist friend or family member who had walked them through how to interpret records, YouTube tutorials that explained census data, genealogy blogs that decoded the vocabulary of historical research. They had done the equivalent of taking a course before using the software.

Younger users had done none of this. They arrived curious and emotionally engaged after seeing their DNA results, but they had no frame of reference for what they were looking at inside the product. When they encountered their first census record and saw a handwritten entry with an unfamiliar abbreviation, they had no one to ask. When a search returned no results because they had spelled a name the modern way rather than the historical variant, they had no way to know that was the problem. When two records showed conflicting birth years for the same person, they had no framework for deciding which one to trust.

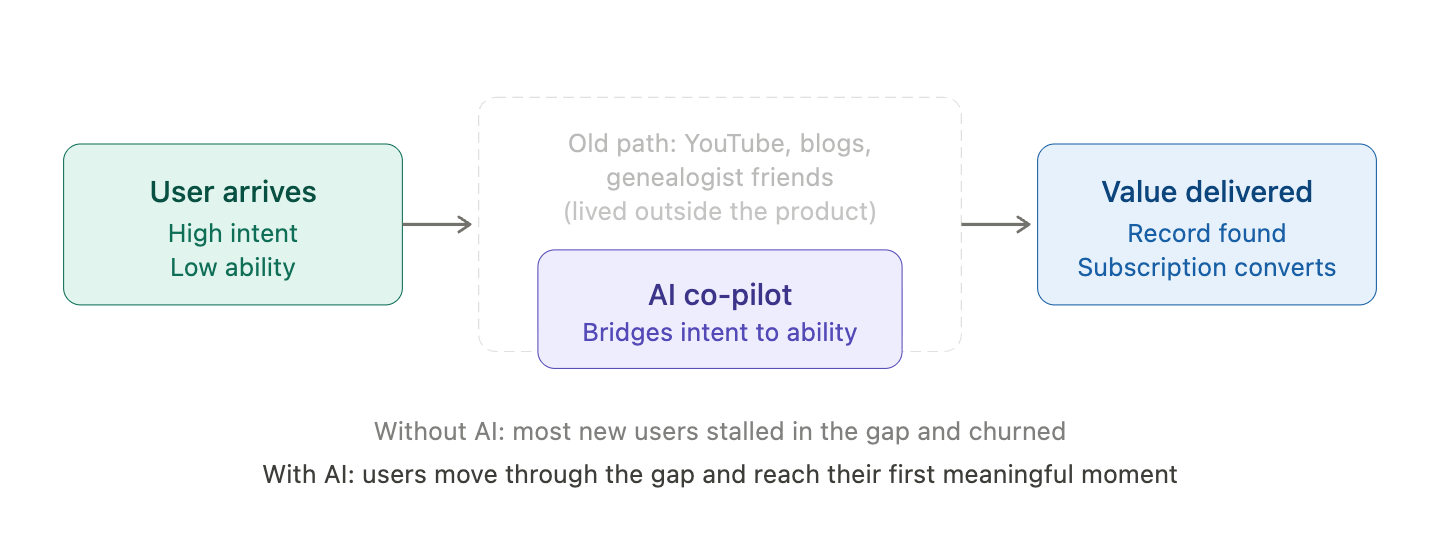

The aha moment from research: The team was not looking for a feature gap or a UX flaw. What they found was something more fundamental: the product’s entire value delivery mechanism depended on skills that lived outside the product, in YouTube videos and genealogist friends. Every user who succeeded had essentially onboarded themselves through a parallel, entirely invisible curriculum. Ancestry had never built an easy-to-digest curriculum of its own. It had just benefited from users who consumed one off its platform.

The research surfaced three specific capability gaps: interpreting historical records, tracing relationships across generations, and verifying conflicting sources when documents disagreed.

But the deeper insight was not just that younger users lacked these skills. It was that they did not particularly want to develop them.

For older users, genealogy was a hobby. The research process itself was the reward - the hours spent hunting through records, the satisfaction of piecing together a family line, the sense of connection it created. In one user research session, a customer captured it perfectly: “Genealogy is the only thing I can talk to my dad about without us arguing.” The process had meaning independent of the outcome.

For younger users, that was not the job to be done. They were excited about their family history. They were not excited about becoming genealogists. They wanted the discovery - the moment of finding out where their family came from, who their ancestors were, what their story was. They just did not want to spend months acquiring expertise to get there.

That distinction changed everything about how the team thought about the solution. The product did not need to teach younger users genealogy. It needed to remove the requirement that they learn it at all.

What They Built and How They Validated It

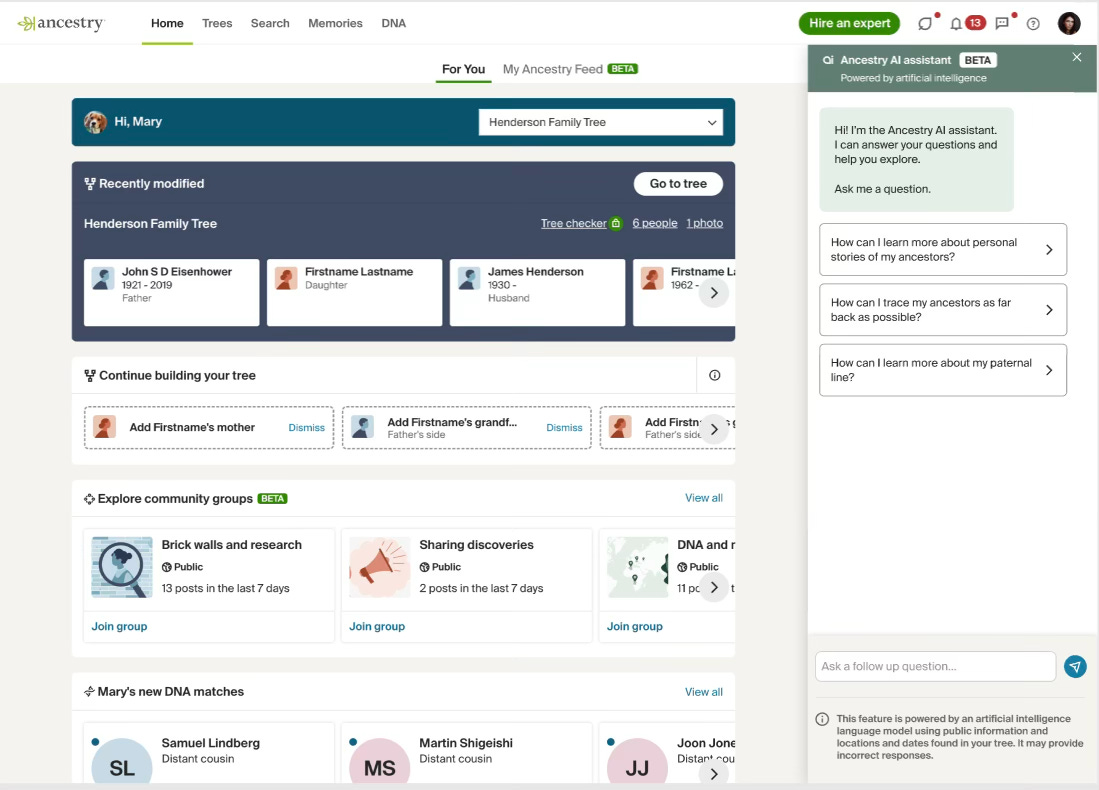

The team built an AI assistant trained on Ancestry’s knowledge base, historical records corpus, and genealogical methodology. It was integrated directly into the DNA customer journey, appearing at the moment of highest user curiosity: right after someone received their DNA results and was beginning to explore what they meant.

Users could now ask questions in natural language and receive answers grounded in real genealogical knowledge:

“How do I research my Irish ancestry?” would return guidance on the specific record types available for Ireland, the time periods most relevant to Irish immigration to the US, and which searches were most likely to yield results given that context

“How are me and Brenda Coffield related?” would trace the shared ancestor path and explain the relationship in plain language

“Where do my Norwegian genes come from?” would connect the ethnicity estimate to historical migration patterns and suggest which geographic regions of Norway might be most relevant to explore

The Ancestry AI assistant appears as a persistent panel on the right side of the homepage, surfacing suggested questions like “How can I trace my ancestors as far back as possible?”

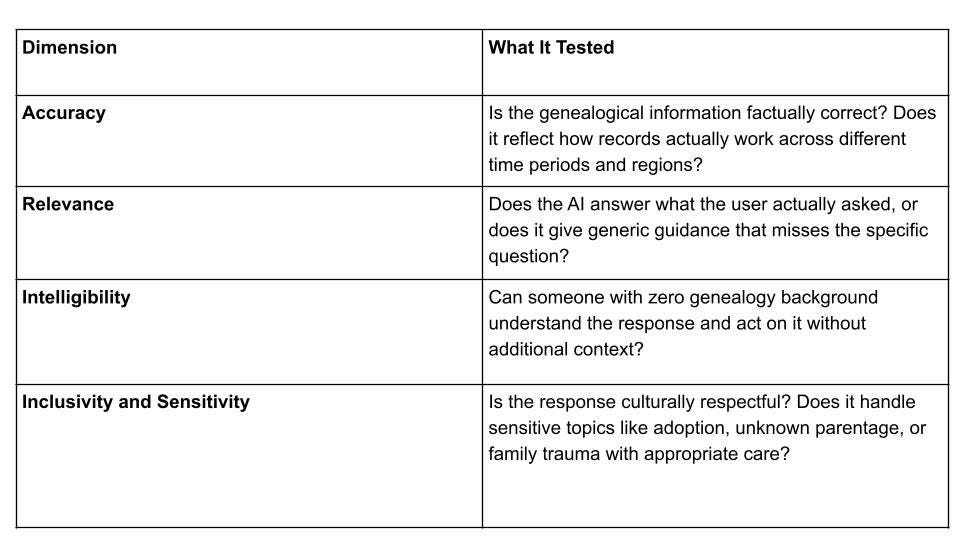

Before launching broadly, the team ran a structured validation process with Ancestry’s in-house genealogy experts. Every AI response was evaluated across four dimensions before the product went live:

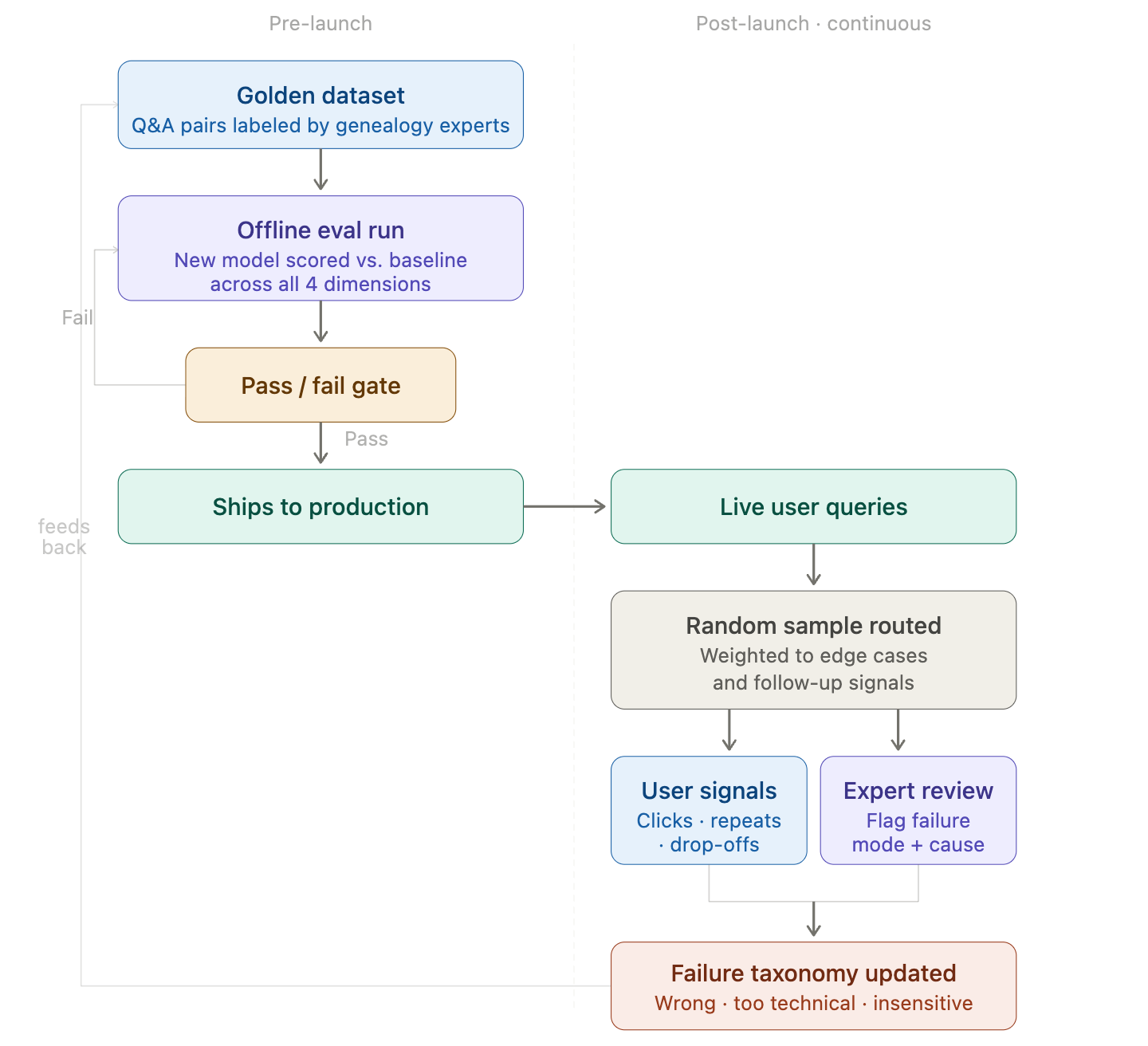

But the four dimensions were not just a pre-launch checklist. They became the foundation for an ongoing evaluation system because in a domain like genealogy, a single confidently wrong answer can destroy a user’s trust in everything that follows. If the AI tells someone their ancestor immigrated from the wrong country, or misidentifies a family relationship, the user does not just lose faith in that response. They lose faith in the product.

This is where evals became critical. The team needed a systematic way to continuously monitor AI response quality across the four dimensions at scale - not just sample-check it before launch. That meant building a feedback loop where flagged responses from real users fed back into the evaluation process, expert reviewers periodically audited live outputs, and the bar for accuracy was treated as a living standard rather than a one-time gate.

The broader principle for any team building AI on top of specialized knowledge: pre-launch expert review gets you to a trustworthy starting point, but it is the ongoing eval infrastructure that keeps you there. Without it, model updates, new record types, or edge cases that were not in your original test set will quietly erode the accuracy that trust was built on.

This expert review process was not just a quality gate. It was a trust-building mechanism. They were shipping something that users would rely on to understand real information about their real families. In high-stakes or emotionally meaningful domains, the user cannot fact-check you - which means the standard for accuracy has to be set by people who can, and maintained continuously long after launch.

Every model update is scored against expert-labeled Q&A pairs before it ships. Once live, real queries are continuously sampled, assessed using both user behavior signals and genealogy expert review, and fed back to raise the bar for the next release.
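To make that release gate concrete, here is a minimal sketch of how a candidate model's expert-graded answers could be compared against the current model before shipping. Everything in it is an assumption for illustration: the `GradedAnswer` structure, the `dim_1`-style placeholder dimension names (the article's four dimensions are not named in the text), and the `min_score` and `max_regression` thresholds are hypothetical and are not Ancestry's actual system.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class GradedAnswer:
    question: str
    # Expert-assigned score per evaluation dimension, each in [0, 1].
    # Dimension names are placeholders, not the ones Ancestry used.
    scores: dict[str, float]


def dimension_means(graded: list[GradedAnswer]) -> dict[str, float]:
    """Average each dimension's score across the whole labeled set."""
    dims = graded[0].scores.keys()
    return {d: mean(g.scores[d] for g in graded) for d in dims}


def passes_gate(
    candidate: list[GradedAnswer],
    baseline: list[GradedAnswer],
    min_score: float = 0.9,        # hypothetical absolute floor
    max_regression: float = 0.01,  # hypothetical allowed drop vs. baseline
) -> tuple[bool, list[str]]:
    """Return (ok, reasons). Fails if any dimension is below the floor
    or has regressed against the currently shipped model."""
    cand = dimension_means(candidate)
    base = dimension_means(baseline)
    failures = []
    for dim, score in cand.items():
        if score < min_score:
            failures.append(f"{dim}: {score:.2f} is below floor {min_score:.2f}")
        elif base[dim] - score > max_regression:
            failures.append(f"{dim}: regressed from {base[dim]:.2f} to {score:.2f}")
    return (not failures, failures)
```

Sampled live queries that experts later grade can be appended to the labeled set, which is one way the bar can rise from release to release.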

Impact

40% increase in repeat visits from DNA-funnel users who previously dropped off quickly after their first session

17 basis point increase in subscription conversion from DNA users into paying Family History subscribers

Users who engaged with the AI assistant spent meaningfully more time in the product and explored more records per session than those who did not
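A quick note on reading the basis-point figure: one basis point is 0.01 percentage points, so a lift quoted in bps is an absolute change in the conversion rate, and its relative size depends on the baseline. The sketch below shows the arithmetic. The 2.00% baseline is purely hypothetical, since the article does not disclose Ancestry's actual conversion rate.

```python
def apply_bps_lift(baseline_rate: float, lift_bps: float) -> tuple[float, float]:
    """Return (new_rate, relative_lift) for an absolute lift in basis points."""
    new_rate = baseline_rate + lift_bps / 10_000  # 1 bp = 0.0001 as a fraction
    relative_lift = (new_rate - baseline_rate) / baseline_rate
    return new_rate, relative_lift


# Hypothetical baseline of 2.00%; the real figure is not in the source.
new_rate, relative_lift = apply_bps_lift(baseline_rate=0.02, lift_bps=17)
print(f"{new_rate:.2%} conversion, {relative_lift:.1%} relative lift")
```

On that assumed baseline, 17 bps moves conversion from 2.00% to 2.17%, which is roughly an 8.5% relative improvement. The same 17 bps on a higher baseline would be a smaller relative change.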

Key Learnings

AI’s highest-value use case in complex products is ability transfer, not automation. The AI co-pilot did not automate genealogy research. It transferred the ability to do research to users who previously could not. That is a different and often more valuable framing for where AI belongs in a product.

The expert friend model is a design target. When you identify that successful users benefited from having a knowledgeable person in their corner, that is a concrete product spec. The goal is to replicate that experience at scale.

If you’ve gotten this far, scroll to the bottom for the full 🔥 IGG Playbook on how to apply this framework to your own product.

CASE STUDY #2: Smart Search

The AI Co-Pilot Solved One Layer. A Second Layer Remained.

The AI assistant helped users understand what they were looking at and know what to search for next. That addressed the knowledge barrier. But understanding alone was not enough. Users still needed to act: to run a search, interpret a results page, and find a record that meant something to them.

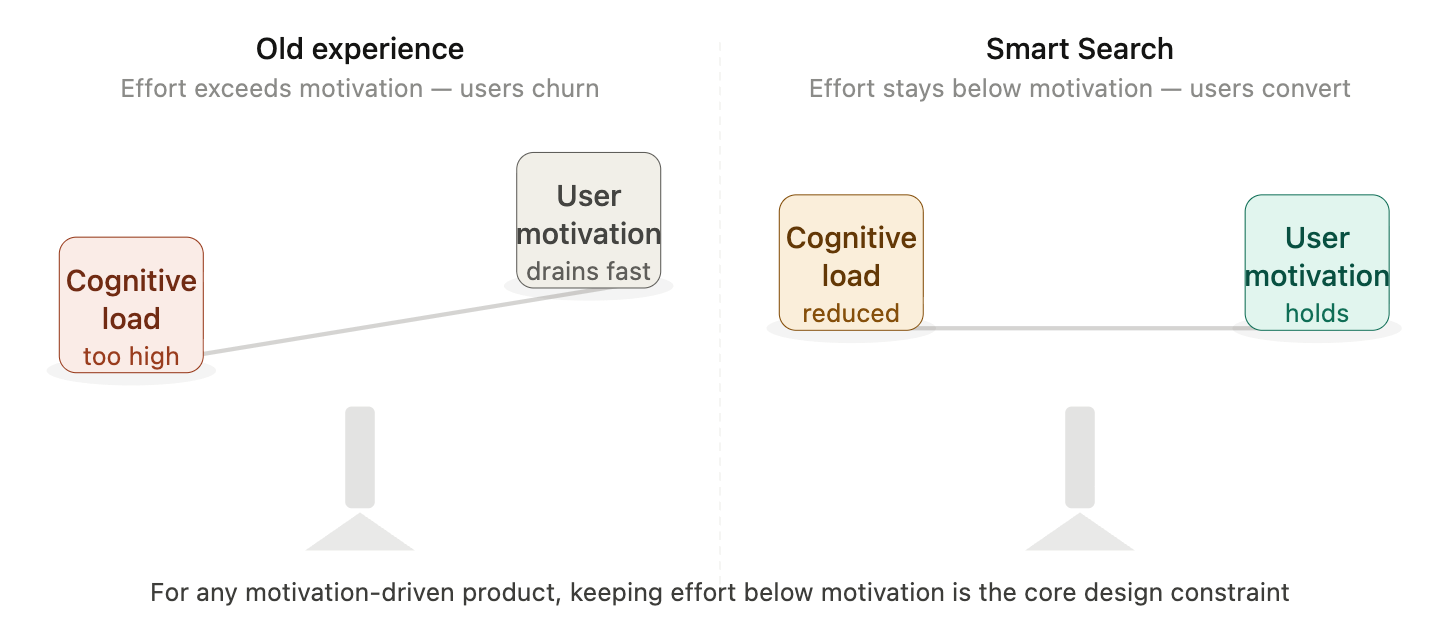

And that is where the team found a second, distinct failure mode. Even when users had the knowledge to run a search, the search experience itself was introducing friction that exceeded the motivation younger users arrived with.

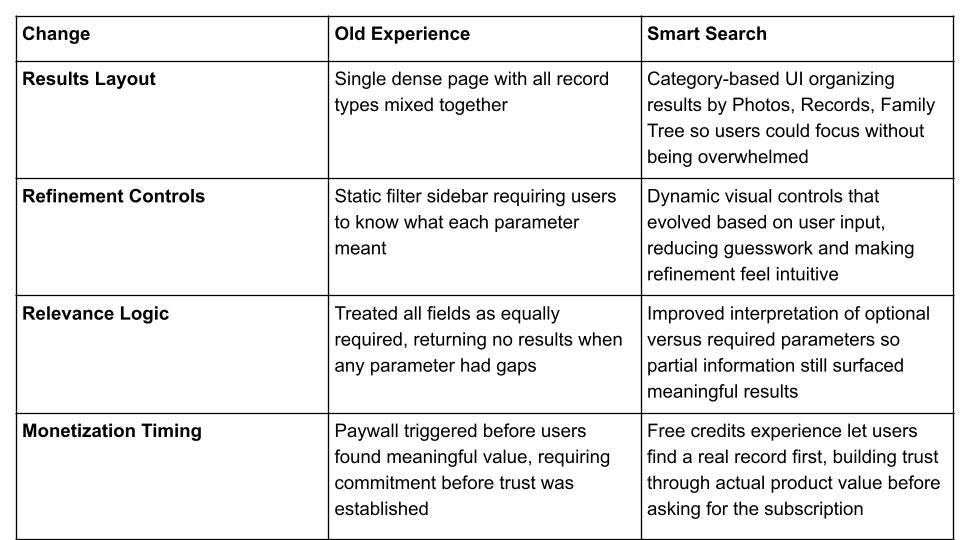

The key insight: Two separate products addressing the same symptom. The AI co-pilot and Smart Search were not redundant. They solved different layers of the same problem. If the team had shipped only the AI assistant without fixing search, users would have understood what to look for but still struggled to actually find it. Solving the knowledge layer without solving the execution layer would have left significant conversion lift on the table.

Why the Search Experience Was Working for One Audience and Not the Other

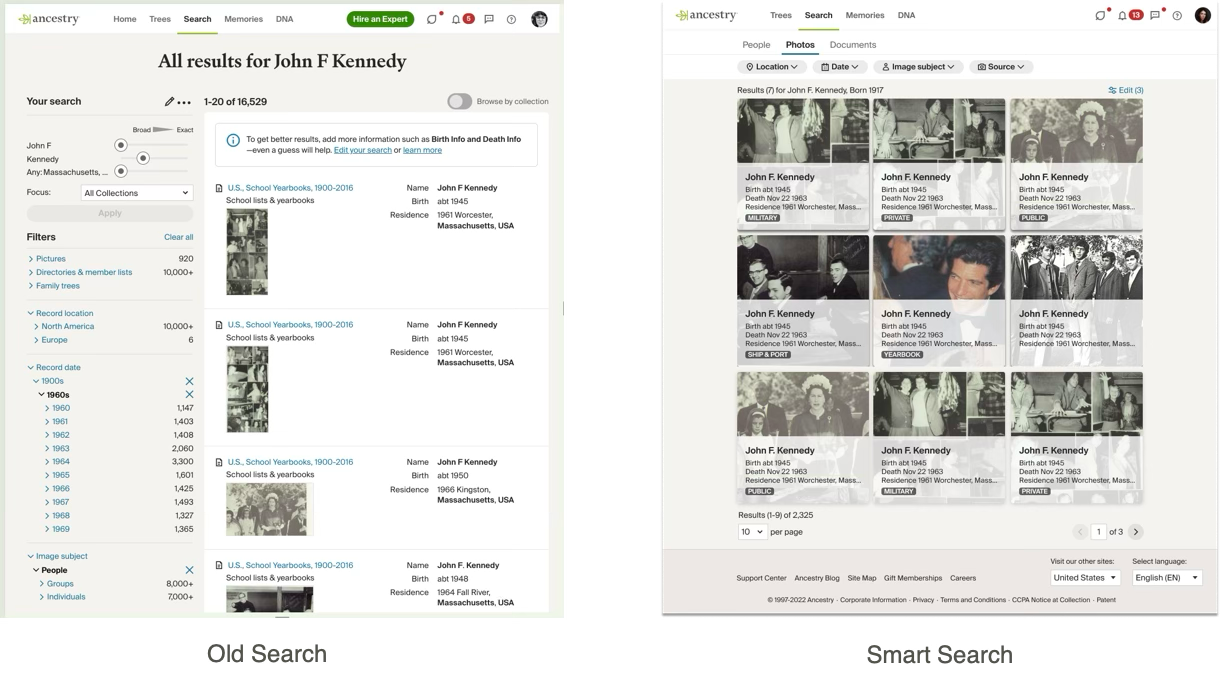

Genealogy search is not like searching on Google, where partial or imprecise queries still return useful results. It requires structuring queries with partial or uncertain information, interpreting results that span multiple records across decades, and refining searches iteratively when initial results come back empty.

The existing search experience had been built over years for users who knew exactly what they were doing. Expert genealogists loved it. It was powerful, deep, and flexible. But for a younger user on their first or second search, the interface presented more parameters and options than felt approachable.

The team had tried to address this through incremental changes before. Each attempt ran into the same tension: any improvement meaningful enough to help a new user felt like too much change to the core user base that had built their research workflows around the existing interface.

The two audiences needed fundamentally different things.

The team also explored whether search was even the right mechanism. An internal prototype tested a more proactive AI-driven experience where the co-pilot would surface results directly rather than waiting for users to query. It did not hold up. Genealogy discovery is inherently iterative - users need to compare multiple results and progressively narrow them down - and a conversational interface was not built for that kind of back-and-forth. Search, rebuilt for the right audience, remained the better foundation.

The Decision to Build a Parallel Experience Rather Than Redesign

Instead of trying to find a compromise that would serve both audiences reasonably well, the team made a more decisive call: build a separate search experience purpose-built for new users, and preserve the existing experience as an opt-in choice for the core audience.

The team focused the new Smart Search experience around a specific insight about new user behavior. Almost universally, the first thing a new Ancestry user searches for is themselves, their parents, or their grandparents. This is the moment of truth: the search that tells a user whether the product actually works for them. If that first search succeeds and delivers a real result, the user’s confidence in the product spikes. If it fails or returns an overwhelming page of undifferentiated records, they are unlikely to try again.

Every design decision in the new experience was oriented around maximizing the probability of a successful first search for this specific scenario.

Four Changes That Defined the Smart Search Experience

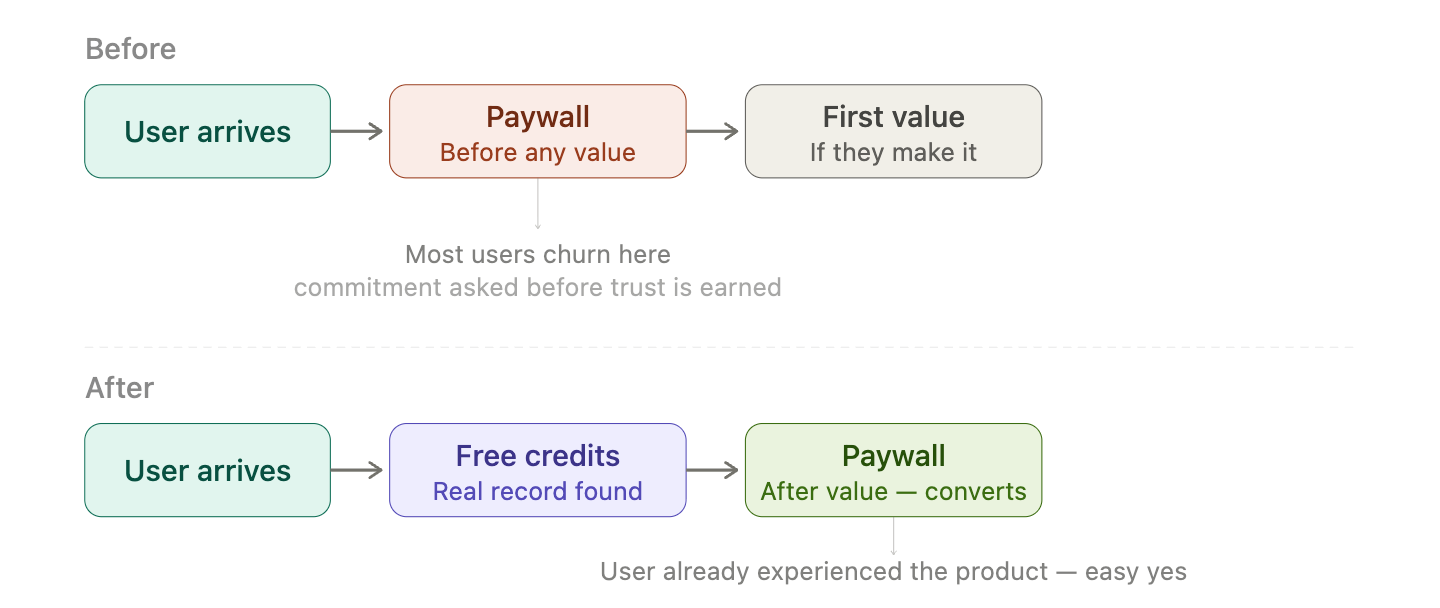

The Free Credits Decision: Letting the Product Earn the Commitment

New users received a small number of credits they could use to view actual historical records without starting a subscription. The intent was to let the product prove itself before asking for anything in return.

The value of an Ancestry record is not abstract. When a new user finds a census entry listing their great-grandmother’s name, age, and the address where she lived in 1920, something shifts. The subscription decision becomes straightforward. They have already experienced what they would be paying for. The paywall is not blocking the product anymore. It is the natural next step after the product has already delivered.

The lesson is not to give away your product. It is to sequence your friction so that commitment asks come after value delivery, not before. For products where the first meaningful outcome is emotional or discovery-driven, this sequencing can meaningfully change conversion rates.
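As a rough illustration of that sequencing, the gating logic can be as simple as the sketch below. The `Viewer` type, the `open_record` function, and the allowance of three credits are all hypothetical; the article says only that new users received "a small number" of credits.

```python
from dataclasses import dataclass


@dataclass
class Viewer:
    is_subscriber: bool = False
    free_credits: int = 3  # hypothetical allowance; the real number is not stated


def open_record(viewer: Viewer) -> str:
    """Decide what a user sees when they try to open a full record."""
    if viewer.is_subscriber:
        return "show_record"
    if viewer.free_credits > 0:
        # Spend a credit so the user sees real value before any commitment ask.
        viewer.free_credits -= 1
        return "show_record"
    # Credits exhausted: the paywall now follows value delivery.
    return "show_paywall"
```

With this ordering, the first paywall a new user meets appears only after they have opened real records.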

Impact

25 basis point increase in subscription sign-ups from new users who went through the Smart Search experience

Higher engagement at every stage of the funnel: more users initiating searches, more engaging meaningfully with results, more reaching the paywall with enough context to convert

Zero disruption to the core expert user base, who retained access to the full-featured search experience as their default

Key Learnings

When one experience cannot serve two audiences well, the answer is two experiences. The opt-in mechanic for existing users is the key enabler. It protects the workflows your best users have built while giving you complete design freedom for the audience you are trying to grow.

Find the moment-of-truth action and optimize everything around it. For Ancestry, this was the first search a new user runs. Map the equivalent in your product: the first action that answers the question “does this actually work for me?” and treat that moment as the primary design target for your new user experience.

Sequence friction after value for emotionally-driven products. Products where the first meaningful outcome is emotional or discovery-driven should place their paywall after that outcome, not before it. Let the product demonstrate its value before asking for commitment.

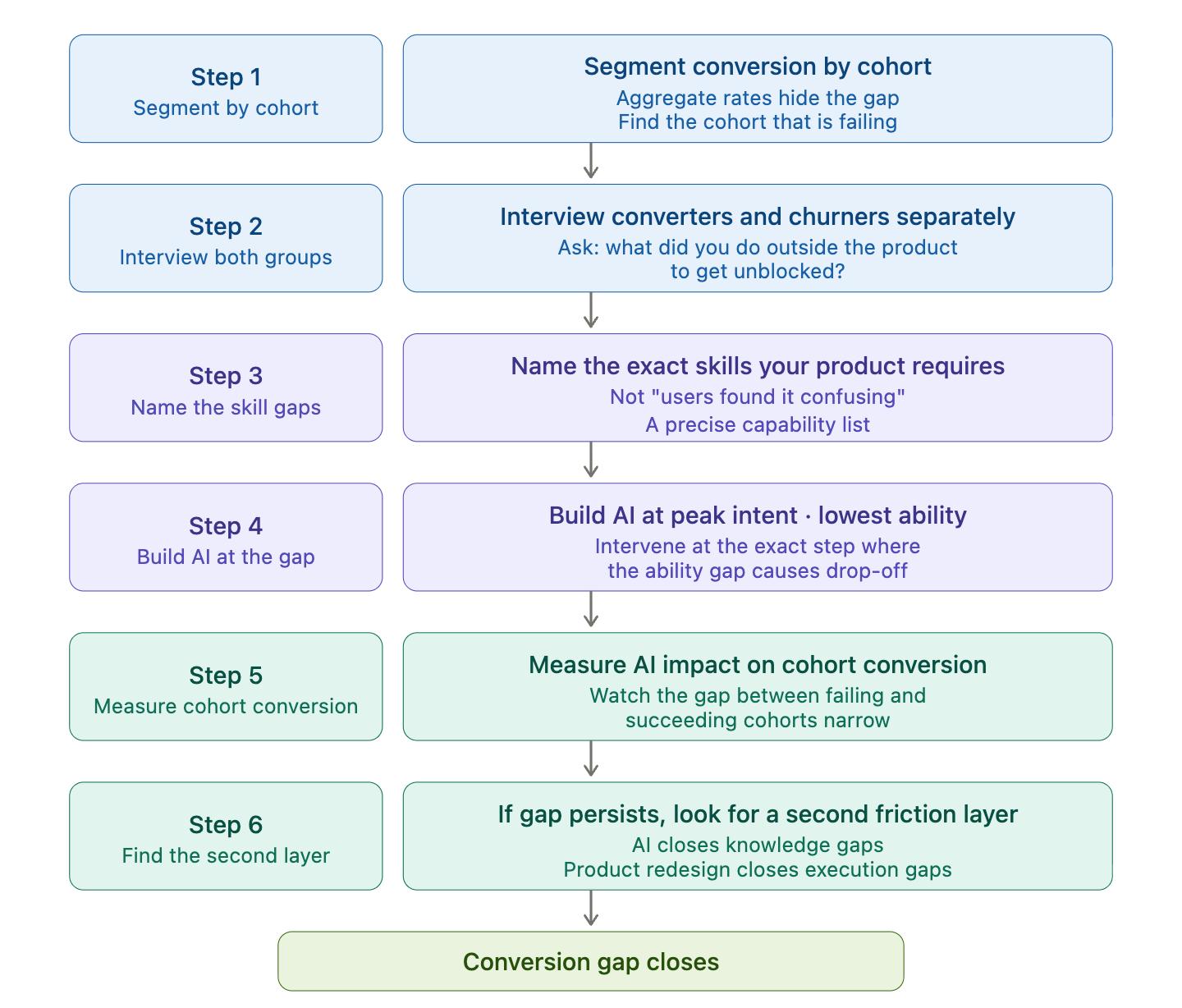

🔥 IGG Playbook: How to Diagnose and Fix a Conversion Problem Using AI

Most conversion problems are ability gaps. This playbook walks through how to find yours and use AI to close it.

Step 1: Separate your conversion data by user cohort

Your overall conversion rate is hiding the real problem. Aggregate numbers flatten the difference between users who succeed and users who fail.

Segment conversion by acquisition channel, user tenure, and any proxy for prior domain knowledge

Look for a cohort that converts significantly below your average - that is your signal
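A minimal sketch of that segmentation, assuming you can export one `(cohort, converted)` pair per user. The cohort labels, the sample counts, and the `threshold` of half the overall rate are illustrative assumptions, not figures from Ancestry.

```python
from collections import defaultdict


def conversion_by_cohort(users):
    """users: iterable of (cohort, converted) pairs -> {cohort: rate}."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for cohort, converted in users:
        totals[cohort] += 1
        wins[cohort] += int(converted)
    return {c: wins[c] / totals[c] for c in totals}


def lagging_cohorts(users, threshold=0.5):
    """Cohorts converting below `threshold` times the overall rate."""
    users = list(users)
    overall = sum(int(converted) for _, converted in users) / len(users)
    rates = conversion_by_cohort(users)
    return {c: r for c, r in rates.items() if r < threshold * overall}


# Made-up sample: 100 users per cohort.
sample = (
    [("organic", True)] * 30 + [("organic", False)] * 70
    + [("dna_funnel", True)] * 5 + [("dna_funnel", False)] * 95
)
print(conversion_by_cohort(sample))
print(lagging_cohorts(sample))
```

In this made-up sample the blended rate is 17.5%, which hides the fact that one cohort converts at 30% and the other at 5%. Only the second is flagged.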

Step 2: Interview the two populations, converters and churners, separately

Do not just analyze the drop-off funnel. Talk to both groups and ask the same questions.

What did you do in your first session?

Where did you get stuck?

What did you do outside the product to get unblocked?

The answer to that last question is where your AI opportunity lives. If churners say “I gave up” and converters say “I watched YouTube tutorials” or “I asked my friend,” the product has an invisible prerequisite it never built.

Step 3: Name the specific skills your product silently requires

Turn the qualitative finding into a precise capability list. Not “users found it confusing” - but exactly what a user needs to know to complete their first meaningful action.

For Ancestry this was three things: interpreting historical records, tracing relationships across generations, and reconciling conflicting sources. Each one was a discrete skill gap that AI could address directly.

The more specific your list, the more targeted your AI intervention can be.

Step 4: Build AI at the moment of highest intent, lowest ability - not as a help feature

This is the most important tactical decision. Most teams build AI as a support layer - something users invoke when stuck.

Map the exact step in your new user journey where the ability gap causes drop-off

Build the AI intervention there, as the primary interface for that moment

The goal is not to answer questions. It is to get the user to their first meaningful outcome without them needing to develop expertise first

Step 5: Measure AI impact on cohort conversion

The wrong metric is AI usage rate or session length. The right metric is whether the cohort that was failing is now converting.

Instrument conversion separately for users who engaged with the AI vs. those who did not

Track repeat visits and paywall reach for the previously-failing cohort specifically

If AI is working, you will see the conversion gap between your two populations narrow - not just overall conversion tick up slightly
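One way to express "the gap narrowed" as a single number is the relative shortfall of the lagging cohort against the core cohort, measured before and after launch. The sketch below assumes you already have per-cohort conversion rates for both periods. The cohort names and rates are hypothetical.

```python
def conversion_gap(core_rate: float, target_rate: float) -> float:
    """Relative shortfall of the target cohort versus the core cohort."""
    return (core_rate - target_rate) / core_rate


def gap_report(before: dict, after: dict, core: str, target: str) -> dict:
    """Compare the cohort gap across two periods."""
    gap_before = conversion_gap(before[core], before[target])
    gap_after = conversion_gap(after[core], after[target])
    return {
        "gap_before": gap_before,
        "gap_after": gap_after,
        "narrowed": gap_after < gap_before,
    }


# Hypothetical rates for illustration only.
report = gap_report(
    before={"core": 0.10, "new": 0.040},
    after={"core": 0.10, "new": 0.055},
    core="core",
    target="new",
)
print(report)
```

Here the lagging cohort goes from converting 60% below the core cohort to 45% below it. That is the signal to look for, even if blended conversion barely moves.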

Step 6: If the AI closes the knowledge gap but conversion still lags, look for a second layer of friction

Ancestry needed two interventions, not one. The AI co-pilot closed the knowledge gap. Smart Search closed the execution gap. Neither alone was sufficient.

After shipping your AI intervention, re-run your cohort analysis. If the gap has narrowed but not closed, ask: what is still making the product hard to use for this audience even when they know what to do? That second layer is usually a product experience problem, not a knowledge problem, and it requires a product solution, not a better AI prompt.

Want help on any product growth challenges you have?

Let’s talk